New to Airtable - two questions

I'm wondering whether this platform supports app creation (a front-end GUI).

Exciting news for healthcare providers and organizations! Airtable is now HIPAA compliant and ready to support your critical work. We understand the importance of protecting sensitive patient dat...

Announcements

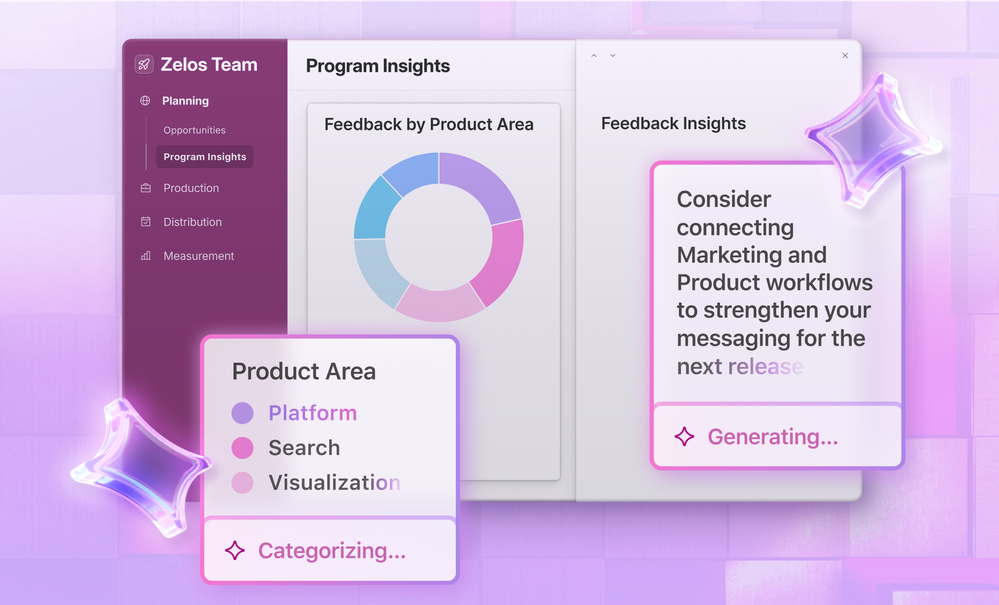

We're thrilled to announce the release of Airtable AI, a set of features with the potential to transform your workflows! These features allow you to embed generative AI capabilities directly within yo...

Announcements

Hello, hello! Welcome to this special edition of our Top Contributors post, covering both January and February! Okay I admit it, I got a tad behind on these posts, but that just means double the fun a...

Announcements

Hi everyone, I’m Carla – a software engineer at Airtable and I am really happy to share that we’ve added the ability to dynamically filter results in linked record pickers! You can now limit the recor...

Announcements