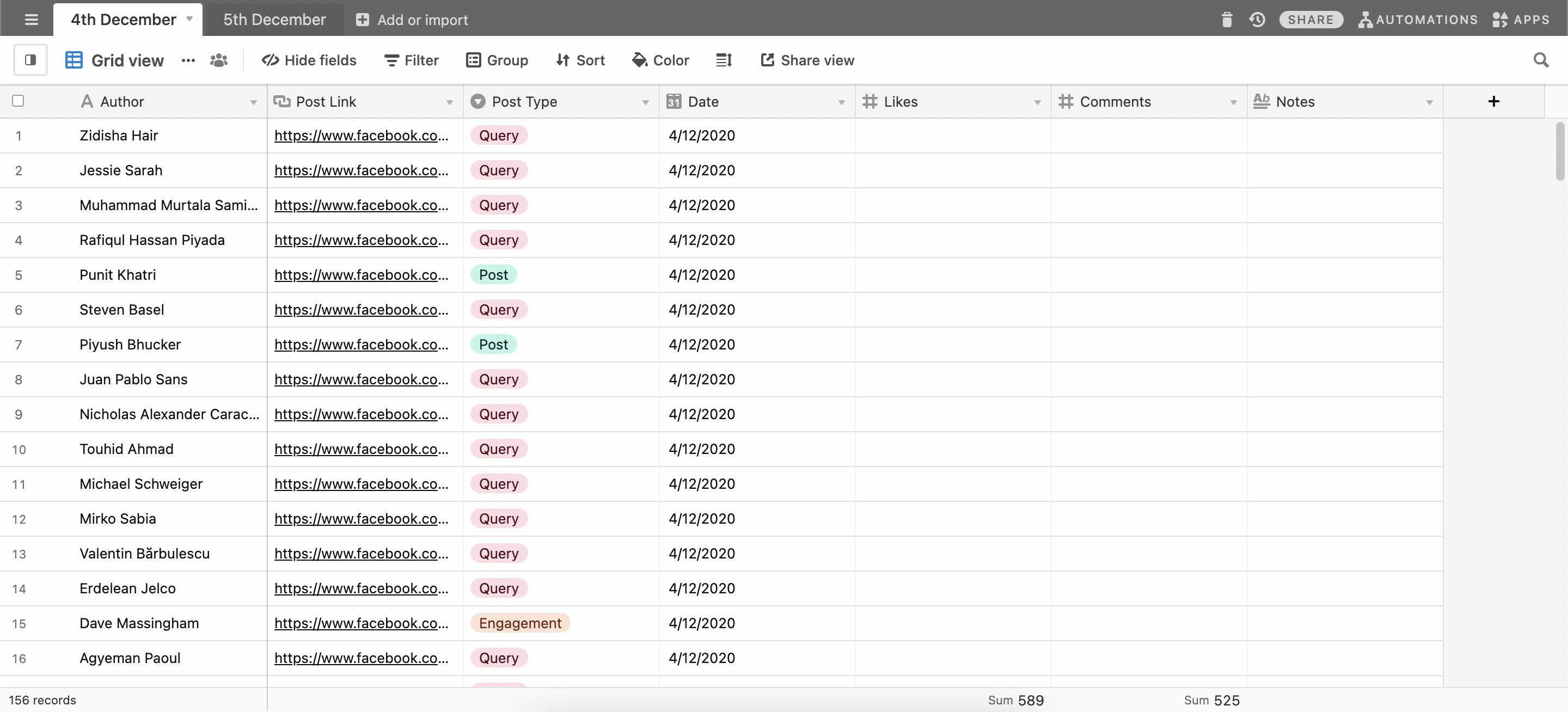

Hello, I am trying to build a content tracker and there are going to be hundreds of entries every day.

I will be creating views where people can filter posts by type and date.

For example: I want to see every “query” posted on 4th December.

Or I want to see every “post” posted between 4th December and 7th December.
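
To make the two filters concrete, here is a rough Python sketch of the lookups I have in mind, assuming all entries sit in one collection and each one carries a type and a posted date. The field names (`type`, `posted`), the sample entries, the year, and the `filter_entries` helper are all made up for illustration and are not tied to any particular tool.

```python
from datetime import date

# Hypothetical sample entries; field names, titles and the year are assumptions.
entries = [
    {"title": "A", "type": "query", "posted": date(2023, 12, 4)},
    {"title": "B", "type": "post", "posted": date(2023, 12, 4)},
    {"title": "C", "type": "post", "posted": date(2023, 12, 6)},
    {"title": "D", "type": "post", "posted": date(2023, 12, 8)},
    {"title": "E", "type": "query", "posted": date(2023, 12, 5)},
]


def filter_entries(entries, entry_type, start, end=None):
    """Return entries of the given type posted from start to end, inclusive.

    If end is omitted, only entries posted on the start date are returned.
    """
    if end is None:
        end = start
    return [
        e for e in entries
        if e["type"] == entry_type and start <= e["posted"] <= end
    ]


# "query" posted on 4th December
queries_on_4th = filter_entries(entries, "query", date(2023, 12, 4))

# "post" posted between 4th December and 7th December
posts_4th_to_7th = filter_entries(
    entries, "post", date(2023, 12, 4), date(2023, 12, 7)
)
```

Both lookups run against the same collection, which is the behaviour I would like from the views, however the data ends up being stored.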

Should I create a new base for each date or should I continue updating the same base with hundreds of thousands of entries?

Or is there a better way to do this that I am totally missing out on?

Thanks