How to query a table ... or do I always have to process all records?

May 18, 2020 08:00 AM

In the API definition for RecordQueryResult it says

A RecordQueryResult represents a set of records. It’s a little bit like a one-off Airtable view: it contains a bunch of records, filtered to a useful subset of the records in the table.

but in the API for Table there’s no way I see to filter when calling selectRecordsAsync(). Is there no way to query only a subset of rows in the table, i.e. the equivalent of a SQL WHERE clause? If so, what is the RecordQueryResult documentation referring to regarding ‘filtered to a useful subset’?
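
For readers looking for the WHERE-clause equivalent: the pattern discussed in the replies is to load the table's records and filter them in the script. Below is a minimal sketch of that pattern. It uses stand-in record objects so it runs outside Airtable; the field names (`Status`, `Amount`) and values are made up for illustration.

```javascript
// Stand-in for Airtable record objects (assumption: only `id` and
// `getCellValue` are used, as on real scripting records).
function makeRecord(id, fields) {
    return { id, getCellValue: (name) => fields[name] };
}

// In an Airtable script this array would come from something like:
//   const query = await table.selectRecordsAsync({ fields: ['Status', 'Amount'] });
//   const records = query.records;
const records = [
    makeRecord('recA', { Status: 'Open', Amount: 120 }),
    makeRecord('recB', { Status: 'Closed', Amount: 80 }),
    makeRecord('recC', { Status: 'Open', Amount: 30 }),
];

// Rough equivalent of: WHERE Status = 'Open' AND Amount > 50
const openAndLarge = records.filter(
    (rec) => rec.getCellValue('Status') === 'Open' && rec.getCellValue('Amount') > 50
);

console.log(openAndLarge.map((rec) => rec.id));
```

The filter runs in the script after every record has been loaded, so it narrows the result but does not reduce the cost of the load itself.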

Sep 13, 2021 12:48 PM

In some select cases it is not necessary for the script writer to iterate across all 45,000 records to get the record in table b. (It is still necessary to retrieve/select all the records in table b).

Scenario 1: The record(s) in table b are linked to the record in table a. You can get the value of the linked record field in table a, and then call getRecord() on the query result with the record id(s) from the linked record field.
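
A rough sketch of Scenario 1 follows. The query result and records are stand-ins so the snippet runs outside Airtable. The stand-in `getRecord` throws for an unknown id, which is how I understand the real one to behave, but treat that as an assumption. The field name `Link to B` is hypothetical.

```javascript
// Stand-ins for a record and a query result (assumption: they mirror the
// scripting API's `getCellValue` and `getRecord(recordId)`).
function makeRecord(id, fields) {
    return { id, getCellValue: (name) => fields[name] };
}
function makeQueryResult(records) {
    const byId = new Map(records.map((rec) => [rec.id, rec]));
    return {
        records,
        getRecord(recordId) {
            const rec = byId.get(recordId);
            if (!rec) throw new Error(`No record with id ${recordId}`);
            return rec;
        },
    };
}

// Table b, loaded once. In a script: await tableB.selectRecordsAsync(...)
const queryB = makeQueryResult([
    makeRecord('recB1', { Name: 'Widget' }),
    makeRecord('recB2', { Name: 'Gadget' }),
]);

// A record in table a whose linked record field points at recB2.
// A linked record cell value is an array of { id, name } objects.
const recordA = makeRecord('recA1', {
    'Link to B': [{ id: 'recB2', name: 'Gadget' }],
});

// An empty linked record field comes back as null, hence the fallback.
const links = recordA.getCellValue('Link to B') || [];
const linkedRecords = links.map((link) => queryB.getRecord(link.id));

console.log(linkedRecords.map((rec) => rec.getCellValue('Name')));
```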

Scenario 2: The record in table b has a specific value in a specific field that needs to match the record in table a, other than the record id. When selecting the records with selectRecordsAsync, you can specify a sort, which will help float the desired record to the top of the array. Then the script can do a find on the query result records. Due to the sort, assuming that the record actually exists, the first match will probably be found before iterating through all of the records.

Of course, if you need to find all of the matching records, it will still be necessary to iterate through all the records.
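
To illustrate the difference between the two cases above, here is a small sketch with stand-in records that are already sorted by a hypothetical `Key` field. `find` stops at the first match, while `filter` always visits every record.

```javascript
// Stand-in for Airtable record objects (assumption: only `id` and
// `getCellValue` are used).
function makeRecord(id, fields) {
    return { id, getCellValue: (name) => fields[name] };
}

// In a script, the sorted load would look something like:
//   const query = await tableB.selectRecordsAsync({
//       fields: ['Key'],
//       sorts: [{ field: 'Key', direction: 'asc' }],
//   });
//   const sortedRecords = query.records;
const sortedRecords = [
    makeRecord('rec1', { Key: 'A-100' }),
    makeRecord('rec2', { Key: 'B-200' }),
    makeRecord('rec3', { Key: 'B-200' }),
    makeRecord('rec4', { Key: 'C-300' }),
];

// Single match: find() returns as soon as the predicate is true.
let inspected = 0;
const firstMatch = sortedRecords.find((rec) => {
    inspected++;
    return rec.getCellValue('Key') === 'B-200';
});

// All matches: filter() has to look at every record.
const allMatches = sortedRecords.filter(
    (rec) => rec.getCellValue('Key') === 'B-200'
);

console.log(firstMatch.id, inspected, allMatches.map((rec) => rec.id));
```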

These techniques do not eliminate the usefulness of Bill’s techniques. However, it can provide some slight performance improvements versus iterating through all records in these select situations. In fact, by specifying the sort, I’m guessing that we are taking advantage of some hidden indexing that Airtable has behind the scenes.

Sep 13, 2021 03:09 PM

Hmmm… that’s fine, we can safely agree that unless it happens to be the last record, it’s not necessary to iterate across the entire table. But that’s not the issue; rather, it’s the hit of performing a selectRecordsAsync() at all - this is the performance bottleneck if hyper-performance is crucial. And given the tight limits, especially in script automations, this is an increasingly constraining climate. How will selectRecordsAsync() perform with 100,000 records? 250,000 records?

Your comment is not debatable setting aside the requirement I laid out - which is to establish the link, not retrieve the linked relationship. The linkage from (a) to (b) does not exist; it needs to be created. How would you do that programmatically without discovering the record ID via some sort of filtering query?

Imagine a table (a) with a view and when a record enters that view, search another table (b) that has 45,000 records in it for a single matching key so that a linkage can be established in (a) to (b).

Answer? – cache-forward the findability mechanism that changes the dynamic entirely using an approach that will work with 20,000 records or 2 million records.

Yep - I played with this approach many times and there are two huge issues:

- You must repeat the sort for every filtering task where arbitrary key values must be discovered independently of each other. Floating exactly what you want to the top is difficult in most cases.

- My tests indicate that sort adds a serious hit to the performance.

I don’t employ this approach for “slight improvements”; these are hyper-performant results comparatively. In the worst case, it is about 6 times faster for a single link assertion. If you need to make a thousand assertions, it can grow to hundreds of times faster. Certainly, less complex approaches also benefit from an increase in the number of lookups/assertions, but I suspect this is not true at increasing scale.

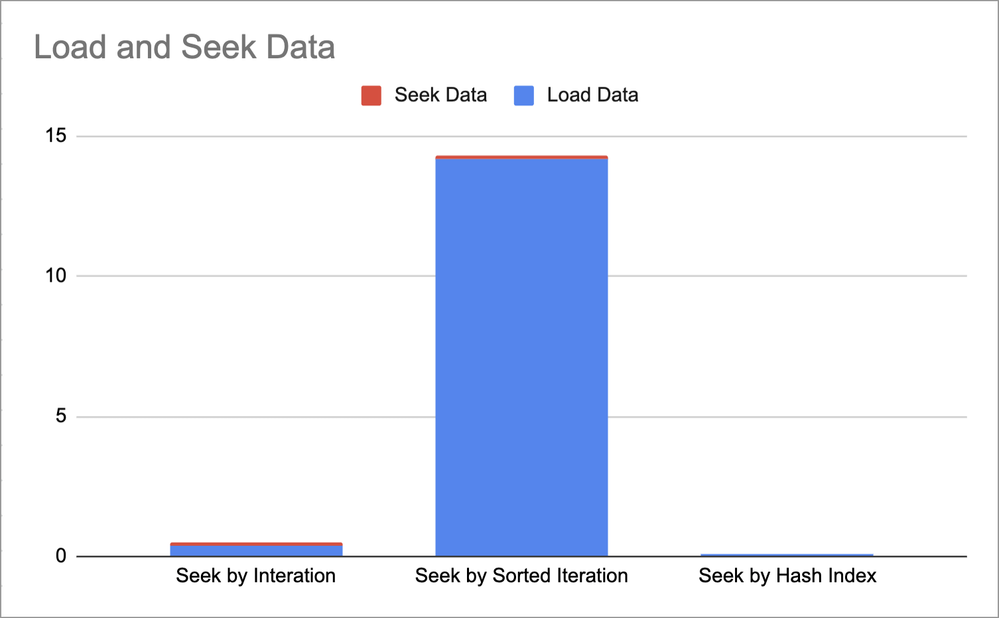

Hmmm, I see no advantage to sorting purely for purposes of lookups the likes of which align with the scenario I suggested. Indeed, while the seek time does fall by about ten per cent, the load time skyrockets by more than 30 times with a sort. And this is just for 20,000 records. I don’t see any case where this is a better approach with a significant number of records.

My conclusion - however biased it may seem - is that it makes sense to endure the added development complexities if hyper-findability at scale matters. Furthermore, hyper-performance seems to be increasingly required in Airtable because the platform is struggling to sustain automation processes as it is, and more developers are discovering their code designs are timing out more frequently.

Sep 13, 2021 06:22 PM

I’ll totally agree with you there. Having to retrieve all of the records in the first place, no matter how many records you actually want is a pretty big hammer for a tiny thumbtack.

I was only trying to address the tiny bit about having to iterate through all the records being the only way.

There also is no question that hash indexes are faster than iterative search.

I wonder for the type of hyper-performance that you want, why is this data stored in Airtable in the first place? I guess because someone wants it in Airtable.

Sep 13, 2021 10:14 PM

Don’t think it’s been mentioned here by name yet, so just in case someone’s still fishing for ideas and gets easily scared by walls of text: memoization is the term to Google. It’s guaranteed to do the job regardless of the dataset size and lazy getters are trivial to write in JS due to the play-it-by-ear typing. Ironically, the ES6 “class” syntax is (I think) the most direct way of creating them.
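
For anyone who wants to see it spelled out, here is one way a memoized lazy getter could look with the ES6 class syntax. This is only a sketch: the class name `KeyIndex`, the `builds` counter, and the field name `Key` are mine, and the records are stand-ins so it runs outside Airtable.

```javascript
// Stand-in for Airtable record objects (assumption: only `id` and
// `getCellValue` are used).
function makeRecord(id, fields) {
    return { id, getCellValue: (name) => fields[name] };
}

class KeyIndex {
    constructor(records, fieldName) {
        this.records = records;
        this.fieldName = fieldName;
        this._map = null; // built on first access, then reused
        this.builds = 0;  // only here to show the index is built once
    }

    // Lazy getter: the Map is created the first time it is needed.
    get map() {
        if (this._map === null) {
            this.builds++;
            this._map = new Map(
                this.records.map((rec) => [rec.getCellValue(this.fieldName), rec.id])
            );
        }
        return this._map;
    }

    idFor(key) {
        return this.map.get(key);
    }
}

const index = new KeyIndex(
    [
        makeRecord('rec1', { Key: 'K1' }),
        makeRecord('rec2', { Key: 'K2' }),
    ],
    'Key'
);

const firstLookup = index.idFor('K2');  // builds the Map
const secondLookup = index.idFor('K1'); // reuses it
console.log(firstLookup, secondLookup, index.builds);
```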

Sep 13, 2021 11:05 PM

usually i use something like

let rhActive = new Map(active.map(rec => [rec.getCellValue('field'), rec.id]));

for that purpose.
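
Expanded a little, the Map approach might be used like this to work out which links need to be created. I am assuming `active` holds the records of table b; `pending`, the link field name `Link to B`, and the sample keys are hypothetical, and the records are stand-ins so the snippet runs outside Airtable.

```javascript
// Stand-in for Airtable record objects (assumption: only `id` and
// `getCellValue` are used).
function makeRecord(id, fields) {
    return { id, getCellValue: (name) => fields[name] };
}

// Records of table b. In a script: (await tableB.selectRecordsAsync(...)).records
const active = [
    makeRecord('recB1', { field: 'SKU-1' }),
    makeRecord('recB2', { field: 'SKU-2' }),
    makeRecord('recB3', { field: 'SKU-3' }),
];

// Key value -> record id. If two records share a key, the later one wins.
const rhActive = new Map(active.map((rec) => [rec.getCellValue('field'), rec.id]));

// Records of table a that still need a link to table b.
const pending = [
    makeRecord('recA1', { field: 'SKU-3' }),
    makeRecord('recA2', { field: 'SKU-9' }), // no match in table b
];

// Each lookup is a single Map.get instead of a scan over table b.
const updates = [];
for (const rec of pending) {
    const targetId = rhActive.get(rec.getCellValue('field'));
    if (targetId !== undefined) {
        updates.push({ id: rec.id, fields: { 'Link to B': [{ id: targetId }] } });
    }
}

console.log(JSON.stringify(updates));
```

The resulting `updates` array has the shape that a batch update call takes, so the links can be written in as few calls as possible.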

Also, since linked records work like ‘join tables’, if the application needs multiple operations that depend on some matching field between A and B, you may add this field (B) as a lookup field in table (A) and not need to query B at all. (That should work like when you add a missing field to a composite index on SQL Server and CPU load drops from 100 to 3-5%.)

In your example, load time is the biggest value. But that’s a single operation: you load the whole set one time in a script and then process it on your side.

I can’t be sure, but I suppose many script writers, not being DB experts, often call updateRecordAsync (or createRecordAsync) in a loop instead of using batch operations. I did the same when I was learning scripting here.
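
A sketch of the batch alternative: split the updates into chunks and make one `updateRecordsAsync` call per chunk. As far as I know a single call accepts at most 50 records, so that is the chunk size used here. The helper names and the mock table are mine; in a real script `table` would come from `base.getTable(...)`.

```javascript
// Assumption: updateRecordsAsync accepts at most 50 records per call.
const MAX_BATCH = 50;

// Split an array into consecutive slices of at most `size` items.
function chunk(items, size) {
    const out = [];
    for (let i = 0; i < items.length; i += size) {
        out.push(items.slice(i, i + size));
    }
    return out;
}

// One call per batch instead of one call per record.
async function updateInBatches(table, updates) {
    for (const batch of chunk(updates, MAX_BATCH)) {
        await table.updateRecordsAsync(batch);
    }
}

// Mock table that only records how many records each call received.
const callSizes = [];
const mockTable = {
    async updateRecordsAsync(batch) {
        callSizes.push(batch.length);
    },
};

// 120 hypothetical updates; the `Done` field is made up.
const updates = Array.from({ length: 120 }, (_, i) => ({
    id: `rec${i}`,
    fields: { Done: true },
}));

updateInBatches(mockTable, updates).then(() => console.log(callSizes));
```

With 120 updates this makes three calls instead of 120.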

If Airtable adds a ‘load single record by filter’ function, that will result in multiple single loads in a script run :grinning:

About 2 million records: I doubt they have such plans, except maybe for some dedicated large Enterprise.

And that could be partitioned across multiple views (or even multiple tables, given current limits).

Sep 14, 2021 03:56 AM

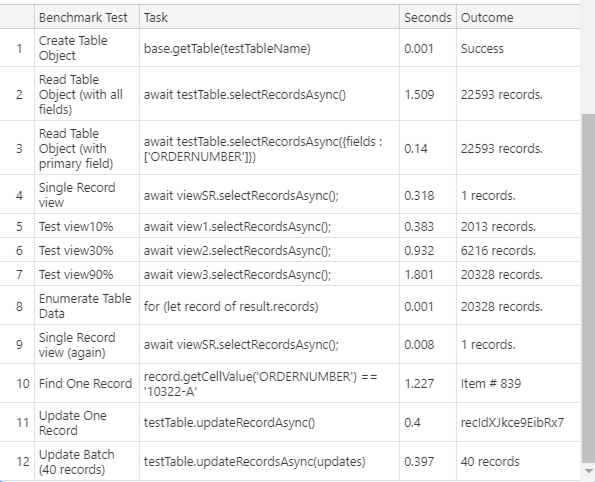

By the way, that’s very interesting performance info. Following the discussion links, I found your base with the benchmark script and added more tests, just out of curiosity. Maybe this info is already known, but anyway, here are the results. The percentages in the views are approximate; I just used random filtering to get close.

Sep 14, 2021 05:28 AM

LOL! Why is any data stored in Airtable? It’s a complicated question whose answer spans many aspects of data management, user experience, and business requirements.

But to be clear, my approach was not fabricated as a result of a single system or one data set; it’s an ongoing attempt to overcome many issues that arise when using Airtable in more rigid processes and/or at greater scale.

Unfortunately, discussions about performance and best practices cannot readily factor in how busy a given instance of Airtable is. We all know that any given instance of a base is like a container at the server level and such a container is granted a fixed amount of resources; enterprise accounts have more and free accounts have less. But even an enterprise account can be brought to its knees with lots of automations, external API use, and external automations from Zapier and Integromat.

As such, when we test various patterns and performance characteristics in our quaint little pro accounts and feel all warm and fuzzy, we are simply engaging in confirmation biases that are likely to be misleading when applied in real conditions.

Sep 14, 2021 06:45 AM

Yep - this is a very good point that I should have explored. It is roughly 9.1% faster when it comes to establishing and seeking a specific id from the record set. It’s a wise enhancement.

Despite all of my words and attempts to be clear about the requirements in my initial scenario, I get the sense that I’m not making it fully apparent the issues that some processes face. Earlier, @kuovonne said this and it seems to be what you are saying.

Your comment makes sense [to me] if the link is already established. But what if the relationship does not exist and needs to now be asserted [programmatically] in an ad-hoc fashion as a result of other workflow process outcomes? In some business requirements, relationships between records are not established until other events in the process occur. And, there are also cases where relationships must change.

Am I correct in understanding that some iterative process must locate b.record as it relates to a.record in order for the desired link to be established? Or, am I missing some magical approach or data model that escapes me and seemingly the vast Airtable user community? I’m old - I miss a lot of obvious methods to create better systems. :winking_face:

Sep 14, 2021 07:05 AM

I think that it is clear now. In my initial reply (about a tiny detail that I am now thinking would have been better left alone) I included two scenarios. I believe my second scenario is what you want. I included my first scenario because it is such a common use case that also requires retrieving all of the records, and I used to use iteration before I discovered the getRecord function.

I don’t think you miss much.

Sep 14, 2021 08:25 AM

That’s kind. I assure you - I miss a lot from time-to-time. But most important, by definition, I generally don’t have a sense for what I miss. :slightly_smiling_face: I need others to tell me.