- Airtable Community

- Discussions

- Ask A Question

- Automations

- Re: Writes from Automation script very slow

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

Re: Writes from Automation script very slow

- Mark as New

- Bookmark

- Subscribe

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Sep 08, 2022 01:20 PM

Hi all! I am writing an automation script to sync data from an external API into Airtable. The table in question has 2,051 records and I’m now hitting the 30s run time limit.

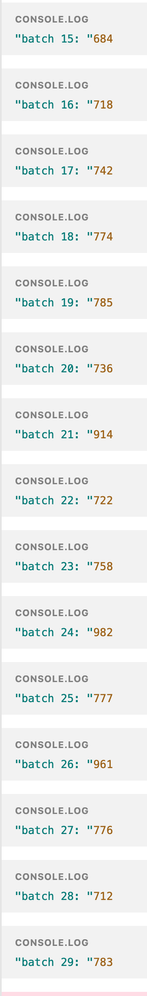

I’ve done all I can think of to optimize performance, I’m already batching the writes in chunks of 50 and using table.updateRecordsAsync. After adding some logging I confirmed that the batches are taking a whopping 750+ms to process — which, for 41 batches, takes me over the time limit. :exploding_head:

Isn’t it crazy that a simple write (I am just copying the values over 1:1, there are no formula or rollup fields) that would take <5ms in a normal database takes so much time in Airtable? Especially considering it is initiated from a script running on their architecture?

Is there anything I can do to further optimize performance? As it is now we are unable to use this which is unfortunate :confused:

- Mark as New

- Bookmark

- Subscribe

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Sep 08, 2022 02:02 PM

Welcome to the Airtable Community!

You can play around with how you await the promises to send up to 15 batches of 50 per second. This gets you close to 750 records per second. I call this technique using mega-batches.

You can also check to see if some records do not actually need updating. Then omit those records from your array of updates.

If these options still don’t work, you can split your script across multiple scripting actions. Each scripting action gets its own set of 30 seconds.

If none of those options work, you can see if you can move the script to run from a button click in Scripting Extension. Scripting extension doesn’t have the 30 second execution time limitation.

- Mark as New

- Bookmark

- Subscribe

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Sep 09, 2022 01:30 AM

Thanks for these pointers! I’ve tried parallelizing these async calls to do ‘mega-batches’, but I found that it makes no difference in how Airtable processes them. For example, compare these two loops (for reference, mstart is the script start time, and create is a Map of records that need to be created — I use a Map to prevent double creations for records referenced multiple times):

for (let i = 0; i < create.size; i += batch) {

let start = new Date().getTime()

console.log(`create batch ${i / batch} start: ${(new Date().getTime() - mstart)/1000}`)

const values = Array.from(create.values()).slice(i, i + batch)

await table.createRecordsAsync(values)

console.log(`create batch ${i / batch} done: ${(new Date().getTime() - mstart)/1000} (${new Date().getTime() - start}ms)`)

}

This gives the following output:

create batch 0 start: 4.525

create batch 0 done: 6.16 (1635ms)

create batch 1 start: 6.16

create batch 1 done: 7.025 (865ms)

create batch 2 start: 7.04

create batch 2 done: 7.841 (801ms)

create batch 3 start: 7.841

create batch 3 done: 8.807 (966ms)

...

When I change that loop to one that does not await the create (a naive implementation of mega-batches):

for (let i = 0; i < create.size; i += batch) {

let start = new Date().getTime()

console.log(`create batch ${i / batch} start: ${(new Date().getTime() - mstart)/1000}`)

const values = Array.from(create.values()).slice(i, i + batch)

table.createRecordsAsync(values).then( () => {

console.log(`create batch ${i / batch} done: ${(new Date().getTime() - mstart)/1000} (${new Date().getTime() - start}ms)`)

})

}

You can see that although the creates are started almost simultaneously, the seem to be throttled internally to one at a time and they still take almost exactly the same amount of time to process per batch:

create batch 0 start: 3.722

create batch 1 start: 3.76

create batch 2 start: 3.837

create batch 3 start: 3.841

...

create batch 0 done: 4.84 (1118ms)

create batch 1 done: 6.364 (2604ms)

create batch 2 done: 7.378 (3541ms)

create batch 3 done: 8.347 (4506ms)

(note that the ms count is not a reflection of the batch processing duration anymore in this case, but the end time compared to the end time of the previous batch is).

Any thoughts, is there something I’m doing wrong here?

- Mark as New

- Bookmark

- Subscribe

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Sep 09, 2022 04:30 AM

This was actually a good suggestion. :pray: I am running some diffing code now before batching the records, and that indirectly solves the issue, as even if the script times out the next run will ignore the already-processed records and pick up where the previous one left off. So eventually it will process all records, as long as it does not receive 1500+ new changes every hour. Though it would be great to find a way to improve performance still.

- Mark as New

- Bookmark

- Subscribe

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Sep 09, 2022 05:24 AM

You cannot control how long Airtable takes to process the requests. How long Airtable takes to process the request depends on the request type, the structure of the base, and whatever else the server is doing. For example, deleting records is faster than creating or updating records where there are a lot of complex rollups with lots of linked records and formula fields.

You can only control how quickly you submit the request, and submitting up to 15 requests in parallel will get the requests submitted faster than awaiting each request. If you might have more than 15 requests, you will still need code to slow down the rate at which you submit the requests.