One of the most interesting parts of leading the Airtable AI User Group is sitting at the intersection of three very different perspectives:

• What Airtable is seeing internally across enablement, product evolution, and enterprise adoption

• What we’re seeing as Partners inside real operational environments

• What the broader community actually needs to understand to make AI useful in practice

That synthesis is what shaped this month’s session.

Last month, I hosted a conversation with Anna Rigby around the Airtable <> Claude connection and how prompting is evolving as AI shifts from simple chat interactions into workflows, automation, and agents.

As those conversations have continued, I wanted to bring together voices from different parts of the ecosystem, because successful AI adoption is not happening in silos.

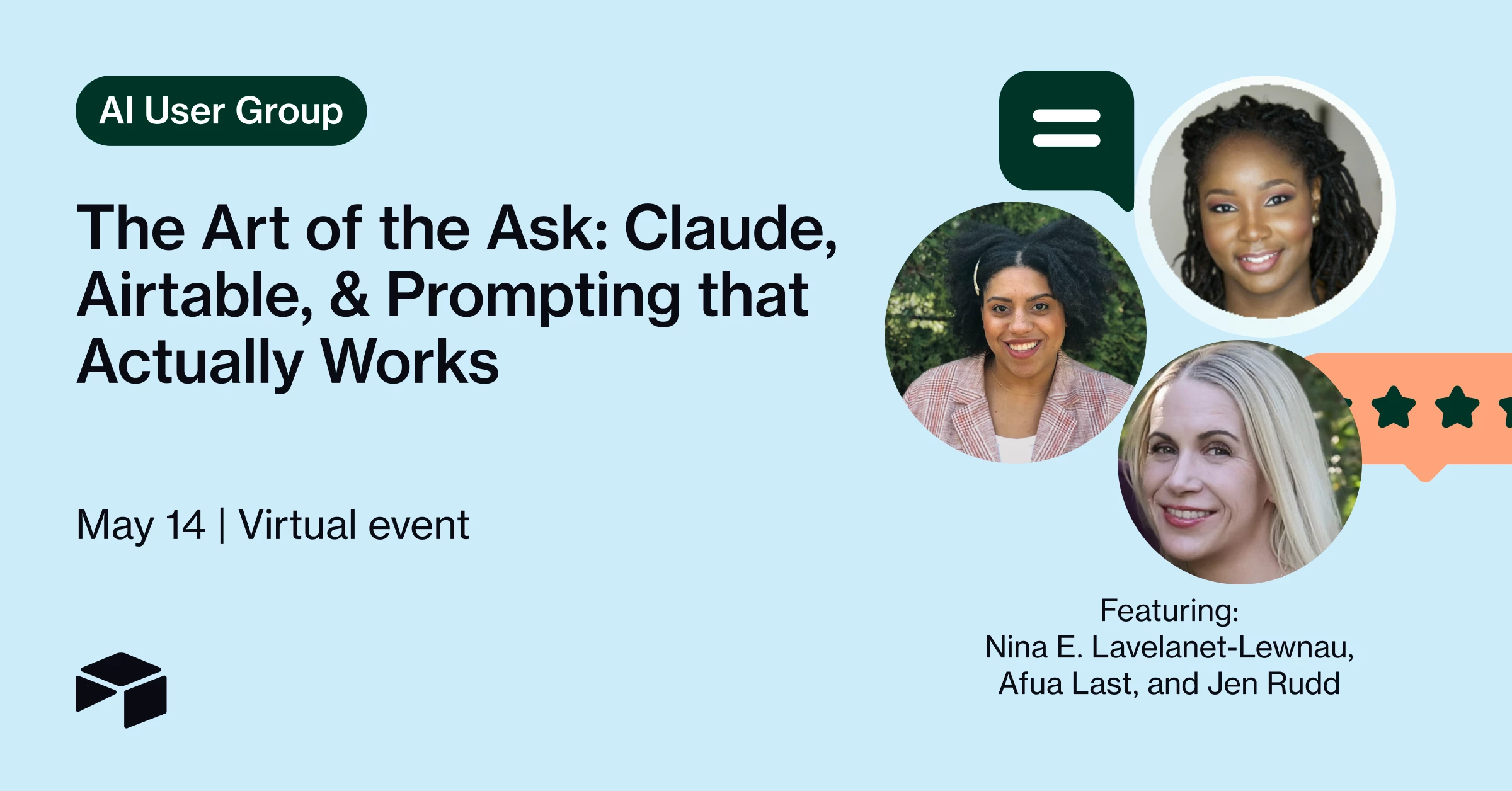

That’s why I’m excited to be joined by:

- Afua Laast, Airtable’s Lead Trainer, sharing what’s emerging across enablement and prompting best practices

- Nina Levalanet-Lewnau, Airtable’s Enablement Manager, discussing how organizations operationalize and scale these capabilities internally

What I appreciate most about this conversation is that it reflects how enterprise transformation is actually happening right now: through close collaboration between platform teams, enablement leaders, and strategic partners working directly alongside customers.

The strongest partnerships extend beyond implementation alone. They help organizations translate platform capabilities into operational outcomes by connecting strategy, enablement, workflow design, adoption, and execution.

As Partners, we have a unique vantage point. We see what Airtable is building and enabling internally, what customers are struggling to operationalize in practice, and what patterns are emerging across real-world implementations. Bringing those perspectives together and translating them into something actionable for the broader community is a big part of why I wanted to host this session.

That’s the lens we’ll be bringing into the discussion.

We’ll be covering:

• Why prompting matters more in the age of agents

• What’s actually working in enterprise AI adoption

• Practical reliability tactics for AI workflows

• Airtable + Claude in operational environments

• Where implementation, enablement, and community learning intersect

If you’re trying to move beyond AI hype and better understand what operational AI adoption actually looks like inside organizations, I’d love to have you join us.

May 14th at 2 PM ET

(See the recap and recording below!)

#AI #Airtable #ClaudeAI #EnterpriseAI #PromptEngineering #AIWorkflows #Automation #DigitalTransformation #Operations #ChangeManagement